Kasey Cromer, Netlok | March 31, 2026

Executive Summary

Traditional authentication was designed to answer one question: should this login succeed? It was not designed to ask whether the person behind the login is safe. In 2026, that gap is becoming a liability.

Attackers are no longer just stealing credentials. They are threatening employees in person, harvesting login data by watching screens, and exploiting the sheer complexity of enterprise identity systems to slip through undetected. The threats have become personal, but the defenses have not kept pace.

This blog 1) examines three 2026 realities that demand a “protect the person, not just the account” mindset; 2) explains why traditional authentication falls short; and 3) outlines what security leaders can do now to close the gap.

Three 2026 Realities Security Leaders Must Address

1. Protecting the person and account

2. Why Traditional Authentication Falls Short

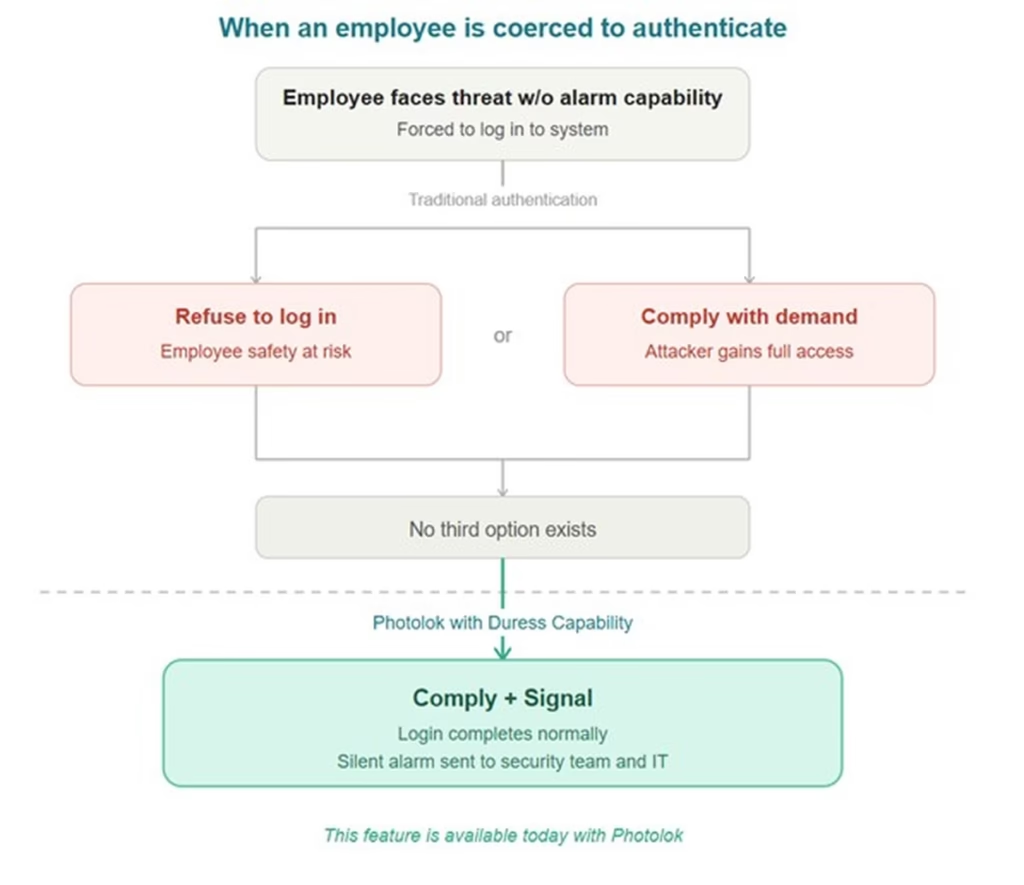

Traditional authentication systems were built to verify identity, not to protect the person behind it. They have no mechanism for an employee to signal that they are being coerced. They rely on credentials that can be observed, captured, and replayed. They add friction through multiple factors without reducing the underlying exposure.

The core assumption, that the person logging in is doing so freely and privately, no longer holds in many real world scenarios. When a teller is threatened, when a phone is stolen, when someone watches you type your password in an airport, traditional MFA does nothing to help.

3. How Photolok Addresses the “Protect the Person” Gap

Photolok Passwordless IdP was designed with these realities in mind. It replaces passwords with photo based authentication that addresses coercion, observation, and complexity at the identity layer:

Figure: Traditional authentication offers only two paths during coercion. Photolok creates a third.

Because Photolok sits at the identity provider and authentication layer, it complements existing security controls without requiring a redesign of downstream systems.

What Security Leaders Should Do Now

Establish duress protocols across security, IT, HR, and physical safety teams. Coercion scenarios do not fit neatly into IT incident response. Work with HR, legal, and workplace safety stakeholders to define what happens when an employee signals distress during authentication. Who gets notified? What access gets constrained? How is the employee protected?

Add coercion and device theft scenarios to incident response playbooks. Most playbooks cover phishing and malware. When an employee is physically threatened, the first priority is personal safety, followed by immediately disabling their account access and coordinating across security, HR, and physical safety teams. When a laptop is stolen with active sessions, response must happen in minutes: log out all devices, reset passwords, and remotely erase the device. Document the response steps now, before you need them.

Implement a credential reset policy after travel or public exposure. Employees who work in airports, conferences, coffee shops, or client sites are at elevated risk for shoulder surfing. Consider requiring credential rotation or one time use authentication for sensitive systems after travel.

Review remote wipe and device lockdown procedures. When a phone or laptop is stolen, how quickly can you revoke access? Test your identity provider integrations to ensure you can lock out a compromised device within minutes, not hours.

Evaluate whether your authentication gives employees any way to protect themselves. Ask a simple question: if an employee is being coerced right now, does your authentication system give them any option other than compliance or refusal? If the answer is no, explore solutions that build human safety into the authentication flow.
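The compliance-or-refusal question above can be made concrete with a small sketch. The Python below is illustrative only: the `AuthOutcome` enum, the `handle_login` function, and the scope and team names are hypothetical, not Photolok's API or any vendor's implementation. It shows the idea of a third path, where a duress signal looks like a normal success to an onlooker while access is quietly constrained and the teams named in the duress protocol are alerted.

```python
from dataclasses import dataclass, field
from enum import Enum


class AuthOutcome(Enum):
    GRANTED = "granted"   # normal, voluntary login
    DENIED = "denied"     # wrong credential or refusal
    DURESS = "duress"     # user silently signalled coercion


@dataclass
class LoginResponse:
    # What the person at the screen (and anyone watching) sees.
    visible_status: str
    # Access actually granted behind the scenes (hypothetical scope names).
    access_scope: str
    # Teams to alert; empty for ordinary logins.
    notify: list = field(default_factory=list)


def handle_login(outcome: AuthOutcome) -> LoginResponse:
    """Map an authentication outcome to a response (illustrative policy)."""
    if outcome is AuthOutcome.GRANTED:
        return LoginResponse("success", "full")
    if outcome is AuthOutcome.DURESS:
        # Looks like success to the attacker, but access is constrained
        # and the duress protocol stakeholders are notified.
        return LoginResponse(
            "success",
            "restricted",
            notify=["security", "hr", "physical_safety"],
        )
    return LoginResponse("failure", "none")


if __name__ == "__main__":
    for outcome in AuthOutcome:
        print(outcome.name, handle_login(outcome))
```

The answers to "who gets notified" and "what access gets constrained" live in the `notify` list and `access_scope` value, which is where a cross-team duress protocol would be encoded.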

The Bottom Line

In 2026, identity is personal. Attackers target people, not just accounts, through coercion, device theft, and the noise of sprawling identity systems. Traditional authentication was built to decide whether a login should succeed, not whether the human behind it is safe.

The organizations that adapt will be those that treat “protect the person, not just the account” as a design principle for every authentication decision they make.

Request Your Personalized Demo

About the Author

Kasey Cromer is Director of Customer Experience at Netlok.

Kasey Cromer, Netlok | March 18, 2026

Executive Summary

The identity and authentication methods that enterprises rely on today were not designed for AI powered attackers. Deepfakes now defeat facial recognition at scale. Voice clones bypass call center verification in seconds. AI generated phishing harvests credentials faster than security teams can respond. What worked five years ago is now a liability.

This is not a future threat. It is happening now in 2026. As Gartner anticipated, 30 percent of enterprises now consider face biometrics unreliable in isolation due to AI generated deepfakes. Deepfake fraud attempts have surged over 3,000 percent since 2022. Voice cloning attacks increased 680 percent in the past year alone. The authentication crisis is here.

Security leaders and boards need to understand that legacy identity and authentication methods have become a material enterprise risk. Photolok Passwordless IdP offers an alternative designed for this threat landscape, replacing passwords and biometrics with photo based identity and authentication that gives AI attackers nothing to clone, nothing to phish, and nothing to replay.

The Authentication Crisis: What AI Has Changed

AI has fundamentally broken the assumptions behind traditional identity and authentication. The methods enterprises have relied on for decades (passwords, one time codes, facial recognition, and voice verification) all assume that attackers are human and that fakes are easy to spot. Neither assumption holds in 2026.

Deepfakes are defeating facial recognition. Attacks using face swap deepfakes to bypass biometric authentication have increased over 700 percent in recent years, and the problem continues to accelerate. The volume of deepfakes shared online has grown 16 fold in just two years, reaching an estimated 8 million in 2025 (Fortune). In Q1 2025 alone, financial losses from deepfake enabled fraud exceeded $200 million in North America. The Arup incident, where a finance worker was tricked into wiring $25 million after a video call with deepfake executives, demonstrated that attackers can now fabricate entire multi person video conferences. When shown high quality deepfake videos, humans correctly identify them as fake only 24.5 percent of the time.

Voice clones are bypassing verification. Voice cloning now requires just three seconds of audio to produce a convincing replica, complete with natural intonation, emotion, and breathing patterns. Voice deepfakes rose 680 percent in the past year (Pindrop). AI generated voice scams surged 148 percent in 2025, with major retailers reporting over 1,000 AI scam calls per day. Synthetic voices no longer carry the obvious flaws that once made them easy to detect. CEO fraud using voice clones now targets at least 400 companies daily.

AI generated phishing is harvesting credentials at unprecedented scale. AI crafted phishing emails achieve 54 percent click through rates compared to 12 percent for traditional phishing, making them 4.5 times more effective (Microsoft Digital Defense Report). Microsoft estimates AI can make phishing operations up to 50 times more profitable through higher engagement and automation efficiency. The FBI has warned that AI greatly increases the speed, scale, and automation of phishing schemes. Over the holiday season in late 2025, AI generated phishing attacks surged 14 fold, representing 56 percent of all reported phishing attacks (Hoxhunt).

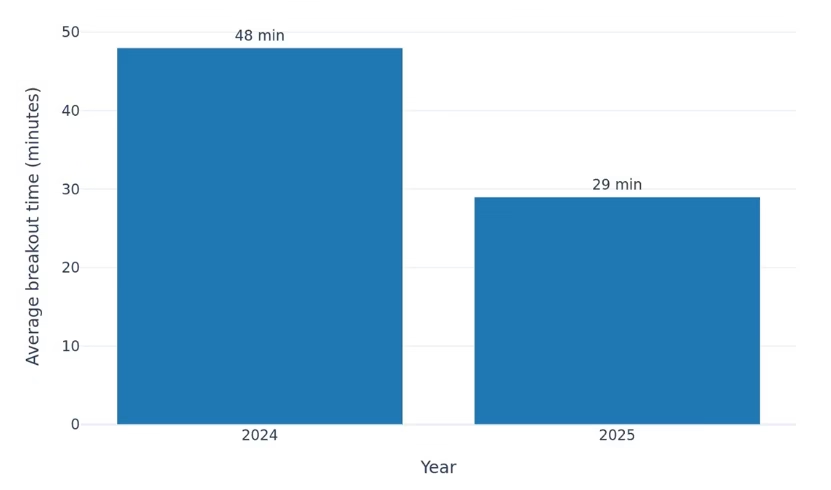

The speed and scale of AI attacks outpace human defenses. AI tools allow threat actors to accelerate reconnaissance, create convincing phishing messages, and scale their operations far beyond what was previously possible (CrowdStrike 2026 Global Threat Report). Average breakout time for cyber intrusions has collapsed to just 29 minutes, with the fastest observed at 27 seconds from initial access to lateral movement. By the time security teams detect an incident, the damage is often done.

Figure: Average Breakout Time Is Shrinking — CrowdStrike 2026 Global Threat Report.

Why Traditional Defenses Are Failing

Passwords remain the dominant login method, and they are still easily compromised. The Verizon 2025 DBIR found that stolen credentials were the initial access vector in 22 percent of breaches, more than any other category. In basic web application attacks, 88 percent involved stolen credentials. Analysis shows that only 3 percent of compromised passwords met basic complexity requirements. Credential stuffing now accounts for 19 percent of all authentication attempts at the median enterprise, rising to 25 percent at large organizations.

SMS and app based one time codes are vulnerable at every step. SIM swapping, real time phishing, and social engineering all defeat these controls. Prompt bombing, where users are bombarded with MFA requests until they approve one out of frustration, appeared in 14 percent of incidents in the 2025 DBIR. Adversary in the middle attacks intercept both passwords and session tokens after legitimate MFA authentication. Phishing as a service kits like Tycoon2FA and EvilProxy are specifically designed to bypass modern MFA controls.

Biometrics, once seen as the answer, are now being defeated by synthetic media. Deepfakes now account for 40 percent of all biometric fraud attempts. One in 20 identity verification failures in 2025 is linked to deepfake usage (Keepnet Labs). Attackers use face swap deepfakes and inject pre recorded or real time manipulated video streams via virtual cameras to fool liveness detection. The technology gap between attack and defense is widening.

The core issue is that these methods assume attackers are human. When AI can perfectly replicate a face, a voice, or a writing style, authentication that relies on “something you are” or “something you know” becomes fundamentally compromised. Enterprises need identity and authentication that AI cannot fake.

Why Photolok Addresses the AI Identity and Authentication Threat

Photolok is not another point solution. It is a Passwordless Identity Provider (IdP) and authentication server that functions as the front door for your apps and systems. It is not a SaaS product. Photolok works with existing systems including Okta Workforce and other identity platforms. As an identity provider, Photolok verifies user identities before granting access to any application. By replacing passwords at this identity layer, Photolok secures authentication across every app and system where your employees use Photolok. The apps themselves never see or store credentials. They simply trust Photolok’s verification.

What makes Photolok different is that it gives AI attackers nothing to work with:

Because Photolok sits at the identity provider layer, it complements existing fraud analytics, transaction monitoring, and security controls.

What Security Leaders Should Do Now

The Bottom Line

AI has broken the identity and authentication model that enterprises have relied on for decades. Passwords are stolen at scale. Biometrics are defeated by deepfakes. Voice verification falls to cloning. Attacks now unfold in minutes, not hours. The methods designed for human attackers cannot withstand AI powered adversaries.

The strategic response is to adopt authentication that gives AI nothing to exploit. Photolok Passwordless IdP replaces passwords and biometrics with photo based, session specific identity and authentication that cannot be cloned or replayed. It integrates with existing platforms like Okta Workforce.

Want to see how Photolok can protect your organization against AI powered authentication attacks?

Request Your Personalized Demo

Sources

[1] Gartner. “Predicts 30% of Enterprises Will Consider Identity Verification Unreliable Due to Deepfakes by 2026.” gartner.com

[2] Verizon. “2025 Data Breach Investigations Report.” verizon.com/dbir

[3] Fortune. “2026 Will Be the Year You Get Fooled by a Deepfake.” December 2025. fortune.com

[4] Pindrop. “2025 Voice Intelligence and Security Report.” pindrop.com

[5] Microsoft. “Digital Defense Report 2025.” microsoft.com

[6] CrowdStrike. “2026 Global Threat Report.” crowdstrike.com

[7] Keepnet Labs. “Deepfake Statistics and Trends 2026.” keepnetlabs.com

[8] Hoxhunt. “Phishing Trends Report 2026.” hoxhunt.com

[9] World Economic Forum. “Global Cybersecurity Outlook 2025.” weforum.org

[10] Netlok. “How Photolok Works.” netlok.com

Kasey Cromer, Netlok | February 28, 2026

Executive Summary

“Pig butchering” refers to scams where fraudsters build trust over weeks or months before steering victims into fake investment schemes, “fattening” them with false gains before the “slaughter” when scammers empty accounts and disappear (TRM Labs). Pig butchering has evolved from fringe consumer crypto fraud into an industrialized scam industry that steals billions globally each year.

These operations increasingly target employees with access to corporate funds and data. What once looked like a consumer romance problem has become a material enterprise risk that blends payment fraud, business email compromise, and targeted social engineering. Traditional controls relying on users spotting red flags or password centric authentication are struggling against well resourced adversaries operating at global scale with near zero enforcement risk (Huntress).

Security leaders need to treat pig butchering as a systemic identity and payments problem, not merely a user awareness issue. That means reducing the blast radius when employees are socially engineered. Leaders should assume that scammers will eventually obtain credentials or convince someone to approve a transaction, and design controls accordingly. Photolok Passwordless IdP helps close this gap by taking passwords off the table and making it significantly harder for scammers to steal or manipulate their way into your systems.

The Pig Butchering Threat Landscape

Pig butchering operations combine relationship building, fake investment platforms, and crypto infrastructure. Scammers cultivate trust over weeks or months across messaging apps, dating platforms, and social networks before steering victims into high yield “opportunities” that are actually scam websites or apps they control. Once funds are deposited, money moves quickly through crypto infrastructure designed to obscure its origins, often crossing multiple jurisdictions in volumes that are difficult to trace in real time or, for that matter, over time.

The scale and professionalization are hard to ignore. The FBI IC3 reported a record $16.6 billion in total cybercrime losses in 2024, an increase of about 33 percent compared to 2023. TRM Labs notes that nearly 150,000 IC3 complaints in 2024 involved digital assets, with $9.3 billion in losses tied to crypto enabled fraud. Of that $9.3 billion, approximately $5.8 billion (62 percent) came from cryptocurrency investment scams. Pig butchering is the largest driver of this category.

Blockchain analytics show that pig butchering remains a dominant component of crypto scam activity. Chainalysis reports that pig butchering revenue in 2024 grew nearly 40 percent year over year and that the number of victim payments to scammers grew by almost 210 percent. At the same time, the average deposit amount declined by more than half, which suggests that scammers are widening the victim pool and accepting smaller amounts in exchange for more total victims.
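The inference in the last sentence follows from simple arithmetic. As a rough check, assume total revenue is approximately the number of payments multiplied by the average deposit. This is a simplification, since Chainalysis may compute its averages differently. Under that assumption, the two reported growth figures imply the size of the decline:

```python
# Rough consistency check of the reported year-over-year changes.
# Assumption (not from the report): revenue ~= payment_count * average_deposit.
revenue_growth = 0.40   # revenue grew nearly 40 percent
payment_growth = 2.10   # number of victim payments grew almost 210 percent

# Ratio of the new average deposit to the old one.
avg_ratio = (1 + revenue_growth) / (1 + payment_growth)
avg_decline_pct = (1 - avg_ratio) * 100

print(f"Implied decline in average deposit: {avg_decline_pct:.0f}%")
```

The implied decline is roughly 55 percent, which is consistent with the reported drop of more than half: many more payments, each much smaller.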

The infrastructure behind pig butchering has become a service industry. Researchers describe “pig butchering as a service” in Southeast Asia where providers sell kits with preregistered SIM cards, stolen social media accounts, fake finance apps, and multilingual scripts for scam workers. These offerings remove much of the overhead of building scams and lower the entry barrier for new actors. A 2025 US Treasury action against Funnull Technology revealed that one company’s infrastructure hosted hundreds of thousands of domains used in crypto investment fraud, including pig butchering schemes.

For executives, the picture is clear. Pig butchering is no longer a niche romance scam that only affects consumers. It is a professionalized fraud ecosystem that blends human trafficking, social engineering, crypto infrastructure, and scalable technology, and it increasingly touches employees and customers who interact with your organization’s money and systems.

Why Traditional Defenses Are Failing

Many organizations still treat pig butchering primarily as a consumer issue. That framing creates blind spots in enterprise risk management and identity strategy.

User awareness by itself is not enough. Scam operators use detailed scripts, share playbooks, and increasingly rely on generative AI to craft realistic personas in multiple languages. They build rapport across personal channels such as WhatsApp, Telegram, dating apps, and social media long before a victim’s work identity is even mentioned. By the time a fraudulent “investment opportunity” appears, the victim may feel a strong emotional bond and is less likely to question unusual requests. CNBC reports that AI is accelerating these scams by enabling scammers to operate in multiple languages and at greater scale than ever before.

Controls tend to focus on channels rather than relationships. Security teams invest heavily in email filtering, secure web gateways, and endpoint protection, but pig butchering conversations often never touch corporate email or networks. The scam starts on personal channels. By the time fraudsters ask employees to move funds or share access, the request bypasses corporate email and security tools entirely.

Authentication remains easy to observe and easy to coerce. Once a victim trusts the scammer, the adversary needs one of three outcomes: the person sends money, shares credentials, or approves an action. Passwords can be phished through fake login pages that resemble investment or banking portals. SMS codes can be requested “for verification” and entered by the scammer in real time. The FBI notes that scammers increasingly coach victims through authentication steps in real time, turning even multi factor authentication into a vulnerability when the user is complicit. Even stronger methods such as passkeys or biometrics can be abused when a victim is persuaded that an approval is safe, routine, or urgently required.

Fraud and security functions are often siloed. Fraud teams monitor anomalies in payment flows and counterparties. Security teams monitor logins, session behavior, and application access. Pig butchering cases frequently straddle both domains. A payment might be technically authorized from a familiar device, but the authorization itself is the product of social engineering. When fraud and security teams don’t share data, these incidents get written off as legitimate user decisions instead of organized crime.

Law enforcement is scaling up but cannot keep pace. The Department of Justice announced the largest ever seizure of funds related to crypto confidence scams in 2025, yet the cross border nature of these networks means many operators still face minimal consequences. For security leaders this reinforces a core design assumption: your defenses must work even when the external environment remains saturated with pig butchering operations.

Traditional perimeter controls and awareness campaigns are necessary but insufficient. You need to redesign how high value identity and payment flows work so that even a socially engineered user cannot easily hand over reusable secrets or authorize high impact actions.

Why Photolok Addresses the Pig Butchering Landscape

Once you see where traditional controls fall short, the answer is to strengthen the one layer pig butchering cannot bypass: identity.

Pig butchering succeeds when scammers can convert social trust into access. That access may be to cash, credentials, or systems. The strategic question is how to reduce damage when an employee trusts the wrong person.

Photolok is not another point solution. It is a Passwordless Identity Provider (IdP) that functions as the front door for your apps and systems. It works with existing systems including Okta Workforce and other identity platforms. As an identity provider, Photolok verifies who users are before granting access to any application. By replacing passwords at this identity layer, Photolok secures authentication across every app and system your employees use. The apps themselves never see or store credentials. They simply trust Photolok’s verification.

• Steganographic photo based authentication with AES 256 encryption. Photolok embeds encrypted codes inside photos. Each session generates a new AES 256 key that is never presented as a visible password or one time code. Users authenticate by selecting photos they recognize rather than typing secrets.

• Randomized recognition challenges. Photolok presents a different set of photos and challenge patterns each session. There is no fixed credential or predictable sequence for attackers to script against. Even when scammers coach a victim through authentication on a live call, they get nothing they can use again.

• Device approval and fingerprinting. Photolok lets organizations control which devices may authenticate. Combined with device fingerprinting, this prevents logins from unknown endpoints even if scammers convince a victim to attempt access from unfamiliar devices.

• Situational security with Duress Photo and 1 Time Photo. The Duress Photo allows a user to appear to authenticate while silently signaling distress and triggering security alerts. The 1 Time Photo becomes invalid after a single use, resisting shoulder surfing and live coaching. These features are specifically designed for scenarios where attackers are actively coaching victims through authentication.

• User friendly and cost effective. No passwords means no resets, no help desk tickets, and no hardware tokens, reducing authentication costs. Photolok leverages the brain’s picture superiority effect for faster recall even under stress.

Because Photolok sits at the identity provider layer, it complements existing fraud analytics, transaction monitoring, and security controls.
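To make the session specific and single use ideas above more concrete, here is a minimal Python sketch. It is not Photolok's implementation, which this article does not describe at that level of detail; the `new_session_key` function and `SingleUseRegistry` class are hypothetical. It only illustrates two general properties: a fresh 256-bit key generated for each session, and a credential that stops working after its first use, so anything an observer captures has no replay value.

```python
import secrets


def new_session_key() -> bytes:
    """Return a fresh random 256-bit key (32 bytes) for one session."""
    return secrets.token_bytes(32)


class SingleUseRegistry:
    """Tracks credentials that are valid for exactly one authentication."""

    def __init__(self) -> None:
        self._unused = set()

    def issue(self) -> str:
        # Hypothetical identifier standing in for a one-time credential.
        token = secrets.token_urlsafe(16)
        self._unused.add(token)
        return token

    def redeem(self, token: str) -> bool:
        # Valid only if it was issued and has never been redeemed before.
        if token in self._unused:
            self._unused.remove(token)
            return True
        return False


if __name__ == "__main__":
    registry = SingleUseRegistry()
    token = registry.issue()
    print(registry.redeem(token))  # first use succeeds
    print(registry.redeem(token))  # replay of the same value is rejected
    print(len(new_session_key()))  # key length in bytes
```

A scammer who watches or coaches one login learns only the redeemed value, which the registry no longer accepts.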

What Security Leaders Should Do Now

1. Incorporate pig butchering into threat models and exercises. Update fraud playbooks to include scenarios where employees are groomed on personal channels before being asked to move company money or share sensitive access. Run tabletop exercises with finance, treasury, and customer success teams.

2. Map high value identity and payment paths. Identify roles that can move money, change settlement instructions, or grant high privilege access. Use that list to prioritize which users and workflows need stronger authentication first. Document how authentication works today and where scammers could realistically insert themselves.

3. Move critical flows to observation resistant authentication at the identity provider layer. Prioritize high value users and transactions. Photolok Passwordless IdP can sit in front of existing IdPs to harden sensitive paths without redesigning downstream systems.

4. Align fraud, security, and AML perspectives. Ensure teams share data and define clear triggers for escalation, such as large transfers to new counterparties combined with logins from unfamiliar devices or locations.

5. Provide targeted education for high risk staff. Pair training on scammer tactics with strong identity controls so users can ask for help without blame when something feels off.

These steps signal a shift from blaming victims to designing systems that assume sophisticated adversaries will eventually reach your people.
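The escalation trigger described in step 4 can be expressed as a simple rule. The sketch below is an illustration under stated assumptions: the `PaymentEvent` fields and the 50,000 threshold are hypothetical placeholders, and a real deployment would draw these signals from its own fraud and identity systems. It shows the key point, that neither the payment signal nor the login signal triggers escalation alone, but the combination does.

```python
from dataclasses import dataclass


@dataclass
class PaymentEvent:
    amount: float               # transfer amount in account currency
    counterparty_is_new: bool   # fraud-side signal: first payment to this payee
    device_is_known: bool       # security-side signal: recognized device
    location_is_usual: bool     # security-side signal: familiar location


# Hypothetical threshold; tune to the organization's own risk appetite.
LARGE_TRANSFER_THRESHOLD = 50_000.0


def should_escalate(event: PaymentEvent) -> bool:
    """Escalate when fraud and security signals line up on one event."""
    risky_payment = (
        event.amount >= LARGE_TRANSFER_THRESHOLD and event.counterparty_is_new
    )
    risky_login = not event.device_is_known or not event.location_is_usual
    return risky_payment and risky_login


if __name__ == "__main__":
    event = PaymentEvent(
        amount=120_000.0,
        counterparty_is_new=True,
        device_is_known=False,
        location_is_usual=True,
    )
    print(should_escalate(event))
```

Evaluating both kinds of signal in one place is only possible when fraud and security teams share the underlying data.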

The Bottom Line

Pig butchering is now a major driver of global cybercrime losses. It is fueled by industrialized scam operations, cryptocurrency infrastructure, and “pig butchering as a service” offerings that let new scammers come online quickly. Scammers win when they can convince someone to send cash, share credentials, or grant access to systems.

The strategic response is to assume some employees will be deceived and design authentication so that deception does not automatically translate into compromise. Photolok Passwordless IdP helps close that gap by turning authentication into a photo based, session specific process that gives attackers nothing to steal, copy, or exploit. It integrates with existing platforms like Okta Workforce.

Want to see how Photolok can help harden your high risk identity flows against pig butchering?

Request Your Personalized Demo

Sources

[1] FBI IC3. “2024 IC3 Annual Report.” ic3.gov

[2] TRM Labs. “Key Findings from the FBI’s 2024 IC3 Report.” trmlabs.com

[3] Chainalysis. “Crypto Scam Revenue 2024: Pig Butchering Grows 40% YoY.” chainalysis.com

[4] CNBC. “Crypto Scams Thrive in 2024 on Back of Pig Butchering and AI.” cnbc.com

[5] Huntress. “What Is a Pig Butchering Scam.” huntress.com

[6] US Department of the Treasury. “Treasury Takes Action Against Cyber Scam Facilitator.” treasury.gov

[7] US Department of Justice. “Largest Ever Seizure of Funds Related to Crypto Confidence Scams.” justice.gov

[8] Netlok. “How Photolok Works.” netlok.com

Kasey Cromer, Netlok | February 23, 2026

Executive Summary

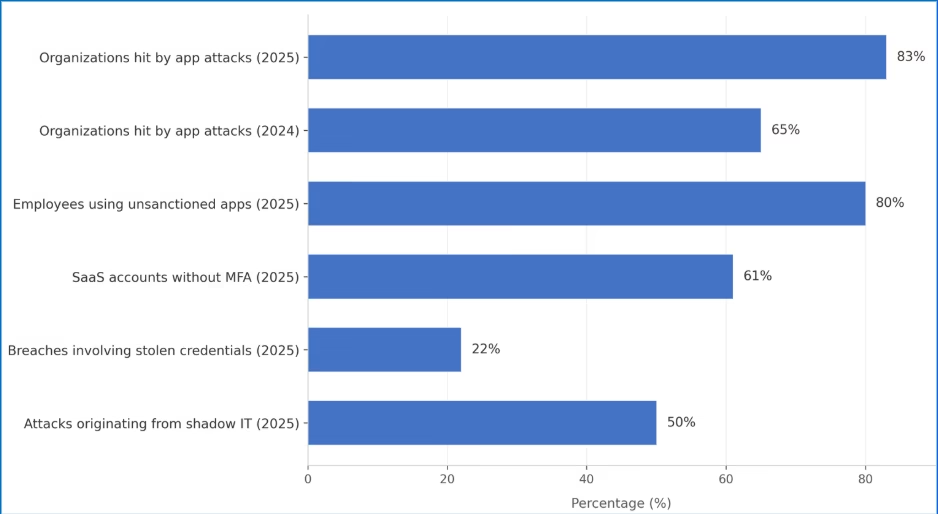

Your employees rely on dozens of mobile and desktop apps every day to collaborate, communicate, and get work done. Attackers know this. In 2025, 83% of organizations surveyed experienced app-targeted attacks, up from 65% the year before (Digital.ai). Infostealers harvested 1.8 billion credentials (DeepStrike). Importantly, 80% of employees now use apps that IT never approved (Microsoft).

The result is an authentication crisis hiding in plain sight. Every app is an entry point. Every login is a potential vulnerability. And most security tools still focus on protecting internal assets while leaving mobile, web, and desktop apps exposed to attacks that happen outside the corporate firewall.

Here’s what security leaders need to understand:

1) Apps have become the dominant attack surface. Credential theft, OAuth token abuse, and infostealer malware all target the authentication layer that apps rely on.

2) Shadow IT is no longer the exception. With employees adopting unsanctioned apps and AI tools without oversight, organizations face authentication blind spots they cannot control.

3) Photolok Passwordless IdP addresses this gap by providing identity verification that works across apps, integrates with existing systems like Okta Workforce, and gives attackers nothing to steal, intercept, or replay.

The App Attack Surface in 2026

Apps and identity now form a single, concentrated attack surface. The chart below shows how these risks converge—app-targeted attacks climbing year over year, most employees on unsanctioned apps, and a majority of SaaS accounts still running without MFA, while nearly half of attacks trace back to shadow IT.

Figure: App-Based Security Threats (2024-2025). Apps and identity are now the primary enterprise attack surface. Source: Digital.ai, Verizon DBIR, Microsoft, SaaS Alerts, Zluri/analyst estimates (2025 data).

The rest of this article unpacks what those numbers mean operationally for your security program.

Mobile devices are no longer just communication tools. They are central to payments, identity verification, healthcare, and enterprise processes. Desktop apps handle everything from financial transactions to customer data. Each app authenticates users, often multiple times per day, creating thousands of potential entry points for attackers.

Credential Theft at Scale

The numbers are hard to ignore. DeepStrike’s 2025 analysis found that infostealer malware harvested 1.8 billion credentials in 2025 alone. These aren’t theoretical risks. Infostealers now operate as Malware-as-a-Service, with attackers deploying them through phishing emails, malicious apps, and compromised websites.

In June 2025, researchers discovered what may be the largest credential exposure in history: roughly 16 billion login credentials compiled from infostealer logs, phishing kits, and prior breaches. The Verizon 2025 DBIR found that credential stuffing now accounts for 19% of all authentication attempts at the median enterprise, rising to 25% at large organizations.

Mobile Apps as Attack Vectors

Mobile phishing has become significantly more effective than email. Spacelift’s 2026 research found that SMS phishing achieves click rates of 19% to 36%, compared to just 2% to 4% for email—up to nine times more effective. Mobile screens unintentionally hide full URLs and security indicators, making phishing links harder to detect (Spacelift). Users move quickly on their phones. And attackers exploit urgency with messages like “Your account is locked” or “Your package is arriving.”

CompareCheapSSL reports that 63% of mobile users received a phishing SMS in the last 90 days. Malicious apps compound the problem. Researchers detected over 100,000 fake apps across major app stores in 2025 (CompareCheapSSL). These apps steal credentials, harvest data, and install backdoors that persist even after removal. And nearly a quarter of enterprise devices now host apps installed outside official app stores that bypass app store security entirely.

Shadow IT and Unsanctioned Apps

Employees aren’t being malicious—they’re trying to be productive. But every unsanctioned app introduces authentication touchpoints that IT cannot see or secure. IBM’s 2025 research found that the average enterprise uses 975 cloud services, but IT departments officially track only 108. The vast majority remain invisible to security teams. Analyst reports from Zluri and CloudEagle peg average shadow IT remediation costs above $4.2 million. Gartner predicts that by 2027, 75% of employees will use technology outside IT oversight. The perimeter has not just expanded. It has dissolved.

Shadow AI: The Newest Blind Spot

Generative AI has created a new category of shadow IT. Employees across departments use ChatGPT, Claude, DeepSeek, and other AI tools to write code, summarize data, and automate workflows. Many do so without IT approval or oversight.

Komprise’s 2025 survey of IT executives found that 90% are concerned about shadow AI from a privacy and security standpoint. Nearly 80% have already experienced negative AI-related data incidents, and 13% report those incidents caused financial, customer, or reputational harm. Between 31% and 38% of AI-using employees enter sensitive work data into AI tools. Once that data leaves the organization, it may be logged, cached, or used for model retraining—permanently outside organizational control.

The IBM Cost of a Data Breach Report found that shadow AI incidents add roughly $670,000 to the average breach cost, pushing totals to $5.11 million versus the $4.44 million global average.

This is why identity-layer controls matter: you cannot control which AI tools employees actually use, but you can govern how they authenticate to reach sensitive data in the first place.

OAuth and Third-Party App Risk

Modern apps connect to enterprise systems through OAuth tokens, API keys, and session credentials. All of these grant access without requiring passwords at each interaction. If any of these are stolen from an unapproved app, attackers can impersonate legitimate users and access corporate data without triggering authentication alerts.

Obsidian Security reports that the biggest SaaS breach of 2025 started with a compromised third-party app exploiting OAuth tokens—with a blast radius 10x greater than direct infiltration. More than 80% of apps are unfederated behind the identity provider, meaning they operate outside SSO and MFA policies. These gaps create direct paths for attackers.
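To make the OAuth risk above concrete, here is a minimal sketch of how a security team might triage third-party grants. The record fields, scope names, and the 90-day staleness threshold are all assumptions for illustration; real grant data would come from your identity platform's own export or API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical scope names treated as high-risk; adjust to your platform.
BROAD_SCOPES = {"mail.read_all", "files.read_all", "admin"}


@dataclass
class OAuthGrant:
    app: str
    scopes: set
    last_used: datetime
    federated: bool  # True if the app sits behind the corporate IdP


def flag_risky_grants(grants, now, stale_after=timedelta(days=90)):
    """Return (app, reasons) pairs for grants that deserve manual review."""
    findings = []
    for g in grants:
        reasons = []
        if g.scopes & BROAD_SCOPES:
            reasons.append("broad scope")
        if now - g.last_used > stale_after:
            reasons.append("stale token")
        if not g.federated:
            reasons.append("outside SSO/MFA")
        if reasons:
            findings.append((g.app, reasons))
    return findings


now = datetime(2026, 3, 1)
grants = [
    OAuthGrant("notes-sync", {"files.read_all"}, datetime(2025, 10, 1), False),
    OAuthGrant("calendar", {"calendar.read"}, datetime(2026, 2, 20), True),
]
report = flag_risky_grants(grants, now)
```

In this example only the hypothetical `notes-sync` app is flagged, for all three reasons. The point is that a simple, repeatable rule set surfaces the grants worth revoking first.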

Why Traditional Authentication Is Failing

These weaknesses show up first at the app layer, where users are authenticating dozens of times per day. Unfederated apps require their own passwords and credentials, separate from corporate SSO, multiplying the number of authentication touchpoints attackers can target. Every attack we’ve described exploits the same fundamental weakness: authentication systems that can be observed, intercepted, or replicated.

Passwords remain the weakest link—the majority of hacking-related breaches still involve compromised credentials somewhere in the attack chain. AI and machine learning can now generate sophisticated phishing schemes and crack passwords far more efficiently than ever before.

SMS codes can be intercepted through SIM swapping. The FBI’s Internet Crime Complaint Center (IC3) recorded nearly 1,000 SIM-swapping complaints and about $26 million in reported losses in the U.S. in 2024 alone. Custom vishing kits can intercept one-time passwords in real time while attackers are on the phone with victims, turning a single SIM swap into a high-impact account takeover.

Passkeys offer better phishing resistance than passwords, but the private key is often stored in device managers. If the device or manager is breached, it becomes a single point of compromise.

Biometrics add convenience, but deepfakes, voice clones, and synthetic identity attacks are making biometric spoofing significantly easier. Once compromised, biometrics cannot be reset.

Why Photolok Addresses the 2026 App Security Landscape

Photolok is not another app. It is a Passwordless Identity Provider (IdP) that functions as the front door for your apps. It works with your existing systems, including Okta Workforce and other identity platforms. As an identity provider, Photolok verifies who users are before granting access to any application. By replacing passwords at this identity layer, Photolok secures authentication across every app your employees use, whether sanctioned or not. The apps themselves never see or store credentials. They simply trust Photolok’s verification.

Steganographic photo-based authentication with AES-256 encryption: Photolok’s patented system embeds encrypted codes within photos, generating a new AES-256 code every session. Users authenticate by selecting from randomized photos only they would recognize—creating verification that cannot be observed and replayed like a password, intercepted like an SMS code, or deepfaked like a biometric.

Randomized recognition challenges: Because photos are randomized every session, AI and machine learning tools have no pattern to learn and no credential to harvest. Unlike passwords or biometrics, there is no static data for attackers to brute-force or simulate.

Device approval and fingerprinting: Users control which devices can access their account. Combined with device fingerprinting, this blocks unauthorized access attempts even if attackers somehow obtain login information.

Situational security protection: Photolok is the only login method with built-in protection for high-risk situations. The Duress Photo acts as a visual silent alarm when users are coerced—whether through social engineering, insider pressure, or threats. The 1-Time Photo protects against shoulder surfing by removing itself after a single use.

User-friendly and cost-effective: Point-and-click navigation with no passwords to remember or reset. This eliminates one of the most common IT support burdens while leveraging the brain’s natural picture-superiority effect for faster, more intuitive authentication.
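The two general ideas behind the features above, a challenge that is reshuffled every session and a credential that expires after one use, can be sketched in a few lines. This is not Photolok's implementation, which is patented and not described in detail here. The class and function names are hypothetical and the sketch only illustrates the concepts.

```python
import secrets


class OneTimeCredentialStore:
    """Tracks credentials that are valid for exactly one login."""

    def __init__(self):
        self._single_use = set()

    def register(self, credential_id):
        self._single_use.add(credential_id)

    def redeem(self, credential_id):
        """Return True the first time a credential is used, False afterwards."""
        if credential_id in self._single_use:
            self._single_use.remove(credential_id)
            return True
        return False


def shuffled_challenge(photo_ids):
    """Return the same photo IDs in a fresh random order for each session."""
    order = list(photo_ids)
    secrets.SystemRandom().shuffle(order)
    return order


store = OneTimeCredentialStore()
store.register("photo-17")
first_use = store.redeem("photo-17")   # accepted
second_use = store.redeem("photo-17")  # rejected: already consumed
grid = shuffled_challenge(["photo-1", "photo-2", "photo-3", "photo-4"])
```

An observer who sees the first login learns a credential that no longer works, and the on-screen position they saw is not repeated by design.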

What Security Leaders Should Do Now

1. Map your app attack surface. Identify all mobile, desktop, and SaaS apps that employees use—sanctioned and unsanctioned. Determine which apps are federated behind your IdP and which operate outside your authentication controls.

2. Assume credentials have been compromised. With 1.8 billion credentials harvested in 2025 alone, the question is not whether your employees’ credentials are in attacker databases, but how you protect authentication when they are. Move beyond passwords and SMS codes for high-risk access.

3. Address shadow IT at the identity layer realistically. You cannot control which apps employees actually use, but you can govern how they authenticate. Solutions like Photolok Passwordless IdP secure the identity layer regardless of what apps sit on top of it.

4. Establish AI governance before shadow AI becomes a breach. Create clear policies for which AI tools are approved and how sensitive data should be handled. Monitor for unauthorized AI usage and provide approved alternatives that meet security requirements.

5. Audit third-party app connections and integrations. Review which apps have access to your enterprise systems through OAuth tokens, API keys, and session credentials. Revoke unnecessary permissions and monitor for anomalous usage.

The Bottom Line

Your workforce runs on apps. So do attackers. Every mobile app, desktop application, and SaaS tool is an authentication touchpoint that attackers can exploit. And with 80% of employees using unsanctioned apps, you cannot secure what you cannot see.

The answer is not to fight the app explosion—it is to secure the identity layer that apps depend on. Photolok Passwordless IdP secures authentication across your app ecosystem, integrates with systems like Okta Workforce, and gives attackers nothing to steal, intercept, or replay.

These attacks show up as unplanned losses, regulatory scrutiny, and board-level questions about why known identity weaknesses were not addressed sooner. The tools exist. The question is whether your organization will deploy them before or after the breach.

Want to see how Photolok can help secure your organization’s app ecosystem?

Request Your Personalized Demo

About the Author

Kasey Cromer is Director of Customer Experience at Netlok.

Sources

[1] Digital.ai. “2025 Application Security Threat Report.” digital.ai

[2] DeepStrike. “Stealer Log Statistics 2025.” deepstrike.io

[3] Verizon. “2025 Data Breach Investigations Report.” verizon.com

[4] Spacelift. “70 Social Engineering Statistics for 2026.” spacelift.io

[5] CompareCheapSSL. “Mobile Security Statistics 2026.” comparecheapssl.com

[6] Nudge Security. “Shadow IT Discovery Guide” (citing Microsoft). nudgesecurity.com

[7] Fidelis Security. “Shadow IT Risks and Detection” (citing IBM). fidelissecurity.com

[8] Zluri. “Shadow IT Statistics 2025.” zluri.com

[9] CloudEagle. “Risks of Shadow IT.” cloudeagle.ai

[10] CIO. “Shadow AI: Hidden Agents Beyond Governance.” cio.com

[11] Bright Defense. “Data Breach Statistics 2026” (citing IBM). brightdefense.com

[12] Obsidian Security. “What Is Shadow SaaS?” obsidiansecurity.com

[13] GitProtect. “Cybersecurity Statistics 2026” (citing SaaS Alerts). gitprotect.io

[14] FBI Internet Crime Complaint Center. “2024 Internet Crime Report.” ic3.gov

[15] Netlok. “How Photolok Works.” netlok.com

Kasey Cromer, Netlok | February 12, 2026

Social engineering has always exploited human psychology. In 2026, attackers have a new partner: artificial intelligence. AI-generated phishing campaigns now achieve success rates roughly four to five times higher than traditional attacks. Deepfake voice cloning requires as little as three seconds of audio. And purpose-built criminal tools can generate thousands of hyper-personalized attack messages in seconds.

Here’s what security leaders need to understand:

1) Social engineering is now cited as a leading cyber threat for 2026. Sharp year-over-year increases are anticipated in the number of attempts, AI-generated campaigns, and business email compromise (BEC) losses, and 94% of businesses experienced at least one social engineering incident in 2025.

2) Attackers have moved beyond email to orchestrate multi-channel campaigns combining phishing, vishing, SMS, and deepfake video across platforms like Slack, Teams, and WhatsApp.

3) Traditional MFA is failing. The identity layer has become the primary battleground, and Photolok’s patented photo-based authentication addresses this gap directly with verification that AI cannot predict, clone, or replay.

Key Social Engineering Metrics

| Metric | Finding | Source |
|---|---|---|
| Average cost of a phishing-driven breach | $4.88M per incident | IBM/Huntress 2025 |
| SMS phishing (smishing) vs. email phishing effectiveness | 19-36% click rate vs. 2-4% for email (up to 9x more effective) | Spacelift 2026 |
| Cloud breaches starting with compromised credentials | 46% of cloud breaches begin with stolen credentials, often obtained via social engineering | CompareCheapSSL 2025 |
| Training effectiveness (sustained vs. one-time) | One-time training reduces susceptibility by only 8%; continuous training improves effectiveness to 23% | CompareCheapSSL 2025 |
| Third-party involvement in breaches | 30% of breaches now involve third parties | Verizon DBIR 2025 |
| Small business survival rate post-breach | 60% shut down within 6 months after a major breach | Huntress 2025 |

The Five Social Engineering Trends Reshaping 2026

1. AI-Powered Hyper-Personalization at Scale

The old advice about spotting phishing (“look for spelling mistakes,” “check the tone”) is obsolete. Modern large language models produce grammatically flawless, contextually accurate messages that mirror your organization’s communication style.

According to SecurityWeek’s Cyber Insights 2026 report, attackers now use AI to scrape social media activity, job roles, company updates, and even earnings calls to generate messages that feel authentic. The result? Phishing emails that reference your recent product launch, congratulate you on a promotion, or follow up on a project you discussed publicly.

HYPR CEO Bojan Simic described the shift directly: “What once targeted human error now leverages AI to automate deception at scale. Deepfakes, synthetic backstories, and real-time voice or video manipulation are no longer theoretical; they are active, sophisticated threats designed to bypass traditional defenses and exploit trust gaps.”

What makes this particularly dangerous is scale. Attackers can now launch hyper-personalized campaigns at mass phishing volume. The economics have shifted decisively in attackers’ favor.

2. Deepfakes Move from Headlines to Standard Playbook

Deepfakes are no longer fringe tools. They’re now a scalable part of social engineering campaigns, woven across entire attack chains rather than used as isolated tricks.

The numbers tell the story: Gartner predicts that by the end of 2026, 30% of enterprises will no longer consider standalone identity verification and authentication solutions reliable in isolation. This shift reflects a stark reality: deepfake attacks bypassing biometric authentication increased 704% in 2023, and the deepfake technology has only improved since.

Real attacks are already causing real damage. In one widely cited case, a finance employee authorized a transfer of roughly $25 million after joining what they believed was a legitimate video call with their CFO. Both the likeness and voice were deepfaked. In early 2026, X-PHY CEO Camellia Chan stated that “deepfakes will become the default social engineering tool by year-end 2026.”

The barrier to entry has collapsed. Attackers now use voice cloning in phone calls with real-time synthesis that replicates an executive’s tone, cadence, and vocal signature. Short-form deepfake videos (15-30 seconds) are being embedded in WhatsApp messages and Slack channels, appearing as urgent updates from leadership.

3. Vishing and Help Desk Attacks Surge

Voice phishing (vishing) has transformed with AI voice-cloning tools. In multiple industries, vishing has replaced traditional phishing as the top social engineering threat.

In January 2026, Okta’s threat researchers warned about custom phishing kits built for vishing being sold on dark web forums. These kits allow attackers to control authentication flows in real time while on the phone with victims. The attacker creates a custom phishing page, spoofs a phone number to impersonate the IT help desk, and convinces targets to visit the page under pretexts like “setting up a passkey” or “verifying account security.”

The ShinyHunters cyber extortion syndicate has already claimed access to major companies through exactly this technique: vishing Okta SSO credentials. Help desk staff become the weak link when they relax verification procedures to accommodate callers who sound panicked.

These attacks succeed because caller ID is easily spoofed yet still treated as partial proof of identity. High-impact actions like resetting MFA or granting access to sensitive tools then proceed without confirmation over a separate, trusted channel.

Defenses include requiring callback verification to a known number (not one provided by the caller), implementing code-based verification where the help desk provides a code the caller must retrieve from their authenticated account, and training staff that urgency is itself a red flag.
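The code-based verification step described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `HelpDeskVerifier` class is hypothetical, codes live in memory, and delivering the code into the user's authenticated account is left out.

```python
import hmac
import secrets


class HelpDeskVerifier:
    """Issues short codes a caller must read back from their authenticated account."""

    def __init__(self):
        self._pending = {}  # user_id -> outstanding code

    def issue_code(self, user_id):
        # Six random digits; in practice the code is shown only inside the
        # user's authenticated session, never read out by the help desk agent.
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[user_id] = code
        return code

    def verify(self, user_id, spoken_code):
        # pop() makes every code single-use, whether or not the attempt succeeds.
        expected = self._pending.pop(user_id, None)
        if expected is None:
            return False
        # Constant-time comparison avoids leaking how many digits matched.
        return hmac.compare_digest(expected.encode(), spoken_code.encode())


verifier = HelpDeskVerifier()
code = verifier.issue_code("alice")
accepted = verifier.verify("alice", code)   # caller read back the right code
replayed = verifier.verify("alice", code)   # same code a second time
```

A caller who cannot log in to the real account never sees the code, so a convincing voice and a spoofed number are not enough on their own. A production version would also need expiry times and rate limiting.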

4. ClickFix: The Attack That Makes You Infect Yourself

A concerning trend has emerged rapidly through 2025 and into 2026: ClickFix attacks. These campaigns use fake CAPTCHA prompts or browser error messages to trick users into running malicious commands on their own computers.

The attack is deceptively simple. You land on a webpage showing what looks like a CAPTCHA (“Verify you are human”) or a browser error (“Update required”). The prompt tells you to press Windows+R to open the Run dialog. You’re then instructed to paste (Ctrl+V) and press Enter. What you don’t realize is that malicious code was silently copied to your clipboard when you clicked the fake prompt.

Microsoft, SentinelOne, and Proofpoint have all documented active ClickFix campaigns. The technique has been adopted by nation-state actors including Kimsuky (North Korea), MuddyWater (Iran), and APT28 (Russia). Criminal groups use it to deliver infostealers like Lumma Stealer and remote access trojans.

ClickFix works because it exploits user fatigue with anti-spam mechanisms and bypasses conventional security tools. The user executes the malware themselves, so there’s no exploit to detect.
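Because the user runs the command themselves, one place defenders can look is the pasted text itself. The sketch below is a rough heuristic, not a vetted detection rule. The pattern list is an assumption chosen for illustration and would produce both false positives and misses in practice.

```python
import re

# Illustrative patterns only: common command interpreters and
# download-and-run idioms seen in pasted one-liners.
SUSPICIOUS = [
    r"\bpowershell(\.exe)?\b",
    r"\bmshta(\.exe)?\b",
    r"\bcmd(\.exe)?\s+/c\b",
    r"\b(curl|wget)\b.*\|\s*(sh|bash|iex)\b",
    r"-enc(odedcommand)?\b",
]
_PATTERNS = [re.compile(p, re.IGNORECASE) for p in SUSPICIOUS]


def looks_like_clickfix(clipboard_text):
    """Return True if the text resembles a command rather than ordinary content."""
    return any(p.search(clipboard_text) for p in _PATTERNS)


flagged = looks_like_clickfix("powershell -w hidden -enc SQBFAFgA")
benign = looks_like_clickfix("Quarterly report attached")
```

A check like this is only a complement to user training: the simplest defense remains teaching employees that no legitimate CAPTCHA asks them to open the Run dialog.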

5. Agentic AI and Multi-Channel Coordinated Attacks

Social engineering no longer arrives through a single channel, and it’s no longer manually orchestrated. Agentic AI is turning social engineering into an end-to-end automated operation, from reconnaissance to outreach to post-compromise lateral movement.

Forrester predicts the emergence of chains of specialized AI agents: some focus on reconnaissance, others craft lures, and others manage infrastructure, together enabling mostly autonomous social engineering operations. Attackers now orchestrate campaigns across email, phone, SMS, and collaboration platforms simultaneously.

A common flow: an email warning about suspicious activity, followed by a vishing call to “confirm your details.” Or a convincing voice message backed up by a phishing link via SMS. If the target ignores one channel, the attacker pivots to another.

Cloud Range’s 2026 analysis found that attackers combine real user data from breaches, AI-generated personas, and automated messaging systems to deceive employees and consumers at scale. Detection and response must focus on interaction patterns, not single events.
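Focusing on interaction patterns rather than single events can be as simple as grouping suspicious events by user and checking whether several channels light up in a short period. The sketch below assumes a hypothetical event format of `(timestamp, user, channel)` and a one-hour window. Both are illustrative choices, not a recommendation.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def correlate(events, window=timedelta(hours=1), min_channels=2):
    """Return {user: channels} for users hit on several channels within the window."""
    by_user = defaultdict(list)
    for ts, user, channel in events:
        by_user[user].append((ts, channel))

    flagged = {}
    for user, items in by_user.items():
        items.sort()  # chronological order
        for i, (start, _) in enumerate(items):
            # Distinct channels seen within `window` of this starting event.
            channels = {ch for ts, ch in items[i:] if ts - start <= window}
            if len(channels) >= min_channels:
                flagged[user] = sorted(channels)
                break
    return flagged


events = [
    (datetime(2026, 2, 1, 9, 0), "alice", "email"),
    (datetime(2026, 2, 1, 9, 20), "alice", "voice"),
    (datetime(2026, 2, 1, 9, 0), "bob", "sms"),
    (datetime(2026, 2, 1, 14, 0), "bob", "sms"),
]
suspects = correlate(events)
```

Here the hypothetical user `alice` is flagged because an email and a call arrived twenty minutes apart, while two unrelated SMS messages to `bob` are not. Each event alone would look routine.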

Why Traditional MFA Is Failing

Here’s the uncomfortable truth: traditional multi-factor authentication is increasingly being defeated.

The custom vishing kits documented by Okta in January 2026 can intercept SMS or voice one-time passwords, push-based MFA, and app-based time-based one-time passwords. Because attackers can control the pages shown to targets and synchronize them with spoken instructions, they can defeat any MFA method that is not phishing-resistant.

The research firm Xcitium reported a 45% year-over-year rise in 2FA phishing attacks in 2025, with global damages recorded at $1.2 billion, noting that over 70% of targeted corporate attacks now involve some form of 2FA bypass.

Phishing-resistant MFA options like FIDO2/WebAuthn security keys, passkeys, and certificate-based authentication offer stronger protection. But most organizations haven’t deployed them broadly, leaving employees vulnerable to attacks that bypass what they believe is strong authentication.
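Part of what makes WebAuthn phishing-resistant is that the browser records the page's origin in the signed client data, and the server rejects any response whose origin is not its own. The sketch below shows only that origin and challenge check. It deliberately omits signature verification and the other steps the specification requires, and the domain names are made up.

```python
import base64
import json


def b64url(data):
    """Base64url without padding, the encoding WebAuthn uses for the challenge."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def check_client_data(client_data_json, expected_origin, expected_challenge):
    """Partial relying-party check of clientDataJSON for a login assertion."""
    data = json.loads(client_data_json)
    return (
        data.get("type") == "webauthn.get"
        and data.get("origin") == expected_origin
        and data.get("challenge") == b64url(expected_challenge)
    )


challenge = b"server-random-bytes"


def make_client_data(origin):
    # Stand-in for what the browser would produce on the given page.
    return json.dumps(
        {"type": "webauthn.get", "challenge": b64url(challenge), "origin": origin}
    ).encode()


legit_ok = check_client_data(
    make_client_data("https://login.example.com"),
    "https://login.example.com",
    challenge,
)
phished_ok = check_client_data(
    make_client_data("https://login.example-support.com"),
    "https://login.example.com",
    challenge,
)
```

A response produced on the look-alike domain fails the check even if the victim cooperated fully, which is exactly the property that one-time codes and push approvals lack.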

Why Photolok Addresses the 2026 Threat Landscape

Every attack we’ve described exploits the same fundamental weakness: authentication systems that can be observed, intercepted, or replicated. Passwords can be phished. SMS codes can be intercepted. Push notifications can be socially engineered. Even biometrics face growing threats from deepfakes.

This is why we built Photolok at Netlok. Photolok’s patented photo-based authentication uses steganographic-coded images that randomize every session. Authentication relies on cognitive recognition: users select from photos only they would recognize. Because the challenge is randomized each session, a selection that is observed cannot be reused by an attacker, intercepted in transit like an SMS code, or deepfaked like biometric data.

Against AI-powered social engineering: Because Photolok’s login process uses dynamic photo randomization and embedded steganographic codes, AI and machine learning tools have no pattern to learn and no credential to harvest.

Against vishing and help desk attacks: When an employee is pressured to ‘verify their identity’ over the phone, Photolok’s visual selection process resists transfer to an attacker. Even if the user describes their photo, the attacker must identify it from a randomized set of images, making accurate selection more difficult. There’s nothing to read aloud, nothing to type into a fake portal.

Against coercion scenarios: The Duress Photo feature addresses what happens when social engineering succeeds at the human level. If someone is being coerced into authenticating, whether through manipulation, insider pressure, or threats, they can select a designated photo that grants access but silently alerts security. In an era where AI makes social engineering more convincing than ever, this silent alarm provides a critical safety net.
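The general duress-credential pattern described above, where a designated choice grants access while quietly raising an alarm, can be sketched as follows. This is a conceptual illustration only, not Photolok's implementation. The `Account` and `LoginResult` types and the in-memory alert list are hypothetical stand-ins.

```python
from dataclasses import dataclass


@dataclass
class LoginResult:
    granted: bool
    silent_alert: bool = False  # server-side only; never sent to the client


@dataclass
class Account:
    normal_ids: set
    duress_id: str


def authenticate(account, selected_id, alerts):
    """Grant access for normal or duress selections; alert only on duress."""
    if selected_id == account.duress_id:
        alerts.append("duress")  # stand-in for paging the security team
        return LoginResult(granted=True, silent_alert=True)
    return LoginResult(granted=selected_id in account.normal_ids)


alerts = []
account = Account(normal_ids={"photo-3", "photo-9"}, duress_id="photo-5")
normal = authenticate(account, "photo-3", alerts)
duress = authenticate(account, "photo-5", alerts)
wrong = authenticate(account, "photo-1", alerts)
```

The key design point is that the normal and duress paths look identical to anyone watching the screen. Only the security team sees the difference.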

What Security Leaders Should Do Now

1. Assume your employees will be targeted with AI-enhanced attacks. Training that focuses on spelling errors and generic greetings is obsolete. Update awareness programs to address AI-generated content, deepfake audio and video, and multi-channel attack sequences.

2. Deploy phishing-resistant authentication. Traditional MFA is no longer sufficient for high-risk roles and sensitive systems. Evaluate solutions like Photolok that resist the specific attack vectors dominating 2026: credential interception, real-time phishing proxies, and AI-powered impersonation.

3. Harden help desk and support workflows. Require callback verification to a pre-verified contact number for high-impact actions like MFA resets, password changes, and access grants. Caller ID and callback numbers supplied by the caller should never be treated as proof of identity.

4. Implement detection for multi-channel attack patterns. Single-channel monitoring misses coordinated campaigns. Security operations should correlate suspicious activity across email, voice, SMS, and collaboration platforms.

5. Establish verification protocols for financial transactions. Any request involving wire transfers, payment changes, or sensitive data should require confirmation through a channel the attacker cannot control.

6. Brief leadership on the AI-enhanced threat landscape. Social engineering losses are a board-level issue. Ensure executives understand that the attacks of 2026 look nothing like the phishing emails they remember.

The Bottom Line

Social engineering has always been about exploiting human trust. In 2026, AI has made that exploitation faster, more convincing, and infinitely scalable. Attackers can clone voices from seconds of audio, generate thousands of personalized attack messages instantly, and orchestrate multi-channel campaigns that adapt in real time.

These attacks show up as unplanned losses, regulatory scrutiny, and board-level questions about why known identity weaknesses were not addressed sooner. They are not just IT incidents; they are enterprise risk events.

The organizations that will avoid becoming the next cautionary tale are those investing in authentication that cannot be socially engineered: systems where there’s nothing to intercept, nothing to replay, and nothing an AI can learn to predict.

Photolok addresses this reality directly. When the attack exploits human psychology, the defense must go beyond human vigilance.

The tools exist. The question is whether your organization will deploy them before or after the breach.

Want to see how Photolok can help secure your organization against AI-powered social engineering?

Request Your Personalized Demo


Sources

[1] ZeroFox Intelligence. “2026 Cyber Threat Predictions and Recommendations.” December 2025. https://www.zerofox.com/blog/2026-cyber-threat-predictions/

[2] SecurityWeek. “Cyber Insights 2026: Social Engineering.” January 2026. https://www.securityweek.com/cyber-insights-2026-social-engineering/

[3] Cloud Range. “5 Key Social Engineering Trends in 2026.” January 2026. https://www.cloudrangecyber.com/news/5-key-social-engineering-trends-in-2026

[4] Hoxhunt. “Vishing Attacks Surge 442%.” December 2025. https://hoxhunt.com/blog/vishing-attacks

[5] Help Net Security. “Okta Users Under Attack: Modern Phishing Kits Are Turbocharging Vishing Attacks.” January 2026. https://www.helpnetsecurity.com/2026/01/23/okta-vishing-adaptable-phishing-kits/

[6] BetaNews. “AI as a Target, Web-Based Attacks and Deepfakes: Cybersecurity Predictions for 2026.” January 2026. https://betanews.com/2025/12/22/ai-as-a-target-web-based-attacks-and-deepfakes-cybersecurity-predictions-for-2026/

[7] Keepnet Labs. “250+ Phishing Statistics and Trends You Must Know in 2026.” January 2026. https://keepnetlabs.com/blog/top-phishing-statistics-and-trends-you-must-know

[8] Keepnet Labs. “Deepfake Statistics and Trends 2025.” November 2025. https://keepnetlabs.com/blog/deepfake-statistics-and-trends

[9] DeepStrike. “Deepfake Statistics 2025: AI Fraud Data and Trends.” September 2025. https://deepstrike.io/blog/deepfake-statistics-2025

[10] Hoxhunt. “Business Email Compromise Statistics 2026.” January 2026. https://hoxhunt.com/blog/business-email-compromise-statistics

[11] FBI IC3. “Business Email Compromise: The $55 Billion Scam.” September 2024. https://www.ic3.gov/PSA/2024/PSA240911

[12] Abnormal AI. “Threat Report: BEC and VEC Attacks Show No Signs of Slowing.” November 2025. https://abnormal.ai/blog/bec-vec-attacks

[13] Microsoft Security Blog. “Think Before You Click(Fix): Analyzing the ClickFix Social Engineering Technique.” August 2025. https://www.microsoft.com/en-us/security/blog/2025/08/21/think-before-you-clickfix-analyzing-the-clickfix-social-engineering-technique/

[14] Proofpoint. “ClickFix Social Engineering Technique Floods Threat Landscape.” February 2025. https://www.proofpoint.com/us/blog/threat-insight/security-brief-clickfix-social-engineering-technique-floods-threat-landscape

[15] SentinelOne. “Caught in the CAPTCHA: How ClickFix Is Weaponizing Verification Fatigue.” May 2025. https://www.sentinelone.com/blog/how-clickfix-is-weaponizing-verification-fatigue-to-deliver-rats-infostealers/

[16] Xcitium Threat Labs. “Unmasking Sneaky 2FA: How Modern Phishing Kits Bypass MFA in 2026.” January 2026. https://threatlabsnews.xcitium.com/blog/unmasking-sneaky-2fa-how-modern-phishing-kits-bypass-mfa-in-2026/

[17] Jericho Security. “Voice Phishing Is Rising: Why ‘Just a Phone Call’ Is Now a Real Threat.” February 2026. https://www.jerichosecurity.com/blog/voice-phishing-vishing-prevention

[18] Netlok. “How Photolok Works.” 2025. https://netlok.com/how-it-works/

[19] Spacelift. “Social Engineering Statistics.” 2025. https://spacelift.io/blog/social-engineering-statistics

[20] Forrester. “Predictions 2026: Cybersecurity and Risk.” October 2025. https://www.forrester.com/blogs/predictions-2026-cybersecurity-and-risk/

[21] Huntress. “Impact of Social Engineering: Key Statistics on Businesses.” 2025. https://www.huntress.com/social-engineering-guide/impact-of-social-engineering-key-statistics-on-businesses

[22] CompareCheapSSL. “100+ Social Engineering Statistics in 2025.” December 2025. https://comparecheapssl.com/100-social-engineering-statistics-in-2025-the-latest-stats-and-trends-revealed

[23] Keepnet Labs. “Security Awareness Training Statistics.” January 2026. https://keepnetlabs.com/blog/security-awareness-training-statistics

Kasey Cromer, Netlok | January 27, 2026

Autonomous AI agents are now executing code, authorizing payments, and modifying systems across enterprise environments. According to PwC’s 2025 AI Agent Survey, 79% of organizations are already adopting AI agents, with Gartner predicting that 40% of enterprise applications will feature task-specific AI agents by the end of 2026 (up from less than 5% in 2025). The transformation is happening at unprecedented speed, and most organizations are deploying these systems without the governance frameworks needed to prevent systemic failures, regulatory violations, board-level accountability crises, and missed ROI targets. MIT research found that 95% of enterprise generative AI projects fail to deliver measurable financial returns, often because of inadequate governance and poor data foundations.

Here’s what security leaders need to know:

1) OWASP released its first Top 10 for Agentic Applications in December 2025, identifying critical risks from goal hijacking to rogue agents that security teams must address immediately.

2) Forrester predicts that agentic AI will cause a major public breach in 2026, with consequences severe enough to result in employee dismissals.

3) The identity layer is the critical control point, and Photolok’s patented photo-based authentication addresses this gap directly, replacing vulnerable credentials with dynamic, steganography-powered verification that AI cannot predict, harvest, or replay.

The Numbers That Define 2026

| Metric | Finding | Source |
|---|---|---|
| Companies with AI agents in production | 57% of enterprises surveyed | G2 August 2025 |
| Practitioners citing security as top AI agent challenge | 62% of AI practitioners surveyed | Warmly Research 2025 |
| Vulnerable agent framework components identified | 43 distinct components compromised via supply chain | Stellar Cyber 2025 |

What Makes Agentic AI Different (and Dangerous)

If you’ve been following AI developments, you might think this is just another incremental step. It’s not. The shift from generative AI to agentic AI represents a categorical change in risk.

Here’s the difference: A standard large language model generates content like text or code. An agentic AI takes that several steps further. It uses tools, makes decisions, and performs multi-step tasks autonomously in digital or physical environments. It doesn’t just talk; it does.

Think about what that means in practice. An AI agent handling procurement can autonomously negotiate with suppliers, issue purchase orders, and authorize payments. A customer service agent can access customer records, modify accounts, and execute transactions. A security operations agent can respond to alerts, quarantine systems, and modify access controls.

When software can make decisions and act on its own, security strategies must shift from static policy enforcement to real-time behavioral governance. The OWASP GenAI Security Project put it directly: “Once AI began taking actions, the nature of security changed forever.”

The OWASP Top 10 for Agentic Applications: Your New Security Framework

In December 2025, OWASP released the first comprehensive security framework specifically designed for autonomous AI systems. This framework names the ten most critical security risks for autonomous/agentic AI systems and gives high-level guidance to mitigate them. It is meant as the “field manual” for securing AI agents that can plan, act, use tools, and make decisions across workflows, similar in spirit to the classic OWASP Top 10 for web apps but focused on agentic AI. Developed with input from over 100 security researchers and providers including AWS and Microsoft, the Top 10 for Agentic Applications was built from real incidents – confirmed cases of data exfiltration, remote code execution, memory poisoning, and supply chain compromise.

The 10 risks (at a glance)

The Shadow Agent Problem Nobody Wants to Talk About

Remember shadow IT? We’re seeing the exact same pattern with AI agents now, except the stakes are exponentially higher.

According to Omdia research, while many enterprises deploy AI agents within controlled environments like Salesforce Agentforce, the real value comes from touching core applications and processes. That’s also where significant cyber risk lives. Employees connect AI tools to company systems without IT oversight, development teams use AI coding assistants with broad repository access, and business units deploy automation agents with excessive privileges. Each of these creates unvetted identity providers and data paths that exist entirely outside normal IAM controls.

The Barracuda Security report from November 2025 identified 43 agent framework components with embedded vulnerabilities via supply chain compromise. Researchers have already discovered malicious MCP (Model Context Protocol) servers in the wild. MCP servers are essentially plug-in tools that extend what agents can do, and a compromised one gives attackers direct access to agent capabilities. One malicious package impersonated a legitimate email service but secretly forwarded every message to an attacker. Another contained dual reverse shells. Any AI agent using these tools was unknowingly exfiltrating data or providing remote access.
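One common mitigation for this kind of supply chain risk is to load only tools whose contents match a hash recorded when the tool was reviewed. The sketch below is a minimal illustration: the allowlist format and the `is_approved` helper are assumptions, and it is not tied to any particular MCP client or package manager.

```python
import hashlib


def sha256_hex(data):
    return hashlib.sha256(data).hexdigest()


def is_approved(name, artifact, allowlist):
    """allowlist maps a tool name to the pinned SHA-256 of its reviewed artifact."""
    pinned = allowlist.get(name)
    return pinned is not None and pinned == sha256_hex(artifact)


reviewed_artifact = b"contents of the reviewed mail tool package"
allowlist = {"mail-tool": sha256_hex(reviewed_artifact)}

original_ok = is_approved("mail-tool", reviewed_artifact, allowlist)
tampered_ok = is_approved("mail-tool", b"same name, different code", allowlist)
unknown_ok = is_approved("lookalike-mail-tool", reviewed_artifact, allowlist)
```

A package that merely impersonates a trusted name fails the check, as does a trusted package whose contents changed after review. Pinning does not prove a tool is safe, but it ensures agents run only what someone actually vetted.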

Why Traditional Authentication Fails the Agentic AI Challenge

Every security incident I’ve described, whether it’s goal hijacking, privilege escalation, or rogue agents, eventually comes down to identity and access. Traditional authentication wasn’t designed for a world where autonomous systems need verified identities, where the line between human and machine actors blurs, and where attackers use AI to generate convincing impersonations at machine speed.

For internal use (protecting team members): How do you ensure the person authorizing an agent’s action is actually who they claim to be? How do you detect coercion? Traditional passwords offer no answer: they are vulnerable to phishing, and AI now generates sophisticated social engineering attacks.

For customer-facing applications: When AI agents handle customer interactions, how do you verify identity without friction? Biometrics face growing threats from deepfakes that convincingly impersonate real people.

For agent-to-system authentication: As Salesforce’s Model Containment Policy emphasizes, AI models must be granted only the minimum necessary capabilities. Enforcing this requires robust authentication at every access point, something static credentials cannot provide.

What is the solution?

This is why we built Photolok at Netlok. Passwords and static one-time codes were designed for human logins in a pre-cloud, pre-agentic AI world, not for autonomous systems making thousands of decisions at machine speed. Photolok’s patented photo-based authentication uses steganography-coded images that randomize every session, creating a verification method designed for a world where attackers use AI to harvest, guess, and replay credentials at machine speed.

For internal teams: Photolok’s intuitive selection process, where users recognize and select their login photos, cannot be replicated by AI or automated systems. When an employee authorizes a sensitive agent action, you have high confidence it’s actually them.

The Duress Photo feature addresses scenarios in which other security tools have been bypassed or ignored. If someone is being coerced into approving an agent’s action, whether through social engineering, physical threat, or insider pressure, they can select a designated photo that grants access but silently alerts security. This applies not just to physical coercion but also to high-stakes financial approvals, privileged access changes, and any agent-executed transaction where verification matters. In an era where AI agents execute transactions in milliseconds, this silent alarm could prevent catastrophic damage.

Against AI-powered attacks: Because Photolok’s login process uses dynamic photo randomization and embedded steganographic codes, it leaves AI/ML tools with little to attack: there is no static credential to harvest, guess, or replay.
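The silent-alarm behavior of the Duress Photo described above can be illustrated with a minimal sketch. This is not Photolok's implementation or API: the `Account`, `LoginResult`, and `handle_photo_selection` names, the photo IDs, and the in-memory alert list are all hypothetical, chosen only to show the idea that a designated photo grants access while quietly notifying security.

```python
from dataclasses import dataclass, field


@dataclass
class LoginResult:
    access_granted: bool
    duress: bool  # internal flag; never shown to the person at the screen


@dataclass
class Account:
    login_photo_id: str   # hypothetical ID of the normal login photo
    duress_photo_id: str  # hypothetical ID of the designated duress photo
    alerts: list[str] = field(default_factory=list)  # stand-in for a security alert channel


def handle_photo_selection(account: Account, user: str, selected_photo_id: str) -> LoginResult:
    """Grant access for either valid photo, but raise a silent alert on the duress photo."""
    if selected_photo_id == account.duress_photo_id:
        # Access is still granted so the coercer sees nothing unusual.
        account.alerts.append(f"duress login by {user}")
        return LoginResult(access_granted=True, duress=True)
    if selected_photo_id == account.login_photo_id:
        return LoginResult(access_granted=True, duress=False)
    return LoginResult(access_granted=False, duress=False)
```

In this sketch the caller would show the same success screen for both valid photos, so only the security team learns that the approval was made under pressure.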

Learning from Enterprise AI Governance: The Salesforce Model

Salesforce’s approach to agentic AI security, documented in their Model Containment Policy and AI Acceptable Use Policy, provides a blueprint every organization should study. Their core principle: “The model reasons; the platform decides.” LLMs provide language intelligence, but configuration provides authority, safety, and accountability.

Key governance requirements:

No Autonomous Authority: AI models may recommend, summarize, classify, or assist, but must not make final decisions with legal, financial, safety, or rights-impacting consequences. Final authority must reside with a human or deterministic system.

Deterministic Control Over Probabilistic Behavior: Critical behaviors must be enforced outside the model. Routing, permissions, approvals, and enforcement must not rely on model judgment. Prompts may guide behavior but must never be the sole enforcement mechanism.

Human-in-the-Loop Requirements: Human review is mandatory when AI output affects individuals’ rights, is used in regulated domains, is externally published, or supports high-risk decisions.

No Self-Expansion: AI models must not modify their own instructions, permissions, or scope; generate or deploy new tools; escalate privileges; or chain actions indefinitely without external limits.

These aren’t optional guidelines. They’re foundational requirements for safe AI agent deployment.
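To make “the model reasons; the platform decides” concrete, here is a minimal sketch of a deterministic gate that sits outside the model. It is an illustration under stated assumptions, not Salesforce’s implementation: the action names, the `ProposedAction` type, and the `decide` function are hypothetical. The model may propose anything, but permissions and the human-approval requirement are enforced by fixed rules that no prompt can change.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action names, chosen only for illustration.
HIGH_RISK_ACTIONS = {"transfer_funds", "modify_access", "delete_data"}
ALLOWED_ACTIONS = {"summarize_ticket", "draft_email"} | HIGH_RISK_ACTIONS


@dataclass(frozen=True)
class ProposedAction:
    agent_id: str
    name: str
    human_approver: Optional[str] = None  # set only after a verified human approves


def decide(action: ProposedAction, agent_permissions: dict[str, set[str]]) -> str:
    """Return 'allow', 'needs_human', or 'deny' using fixed rules only."""
    granted = agent_permissions.get(action.agent_id, set())
    # Unknown actions and actions outside the agent's grant are always denied,
    # so an agent cannot expand its own scope by proposing something new.
    if action.name not in ALLOWED_ACTIONS or action.name not in granted:
        return "deny"
    # High-risk actions wait for a human, regardless of what the model says.
    if action.name in HIGH_RISK_ACTIONS and action.human_approver is None:
        return "needs_human"
    return "allow"
```

With `perms = {"agent-1": {"summarize_ticket", "transfer_funds"}}`, a proposed `transfer_funds` from `agent-1` returns `"needs_human"` until an approver is attached, and any action outside the grant returns `"deny"`.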

The Real Cost of Waiting

The organizations deploying AI agents today face a choice: implement proper governance now, or scramble to explain failures later.

Omdia analyst Todd Thiemann’s prediction for 2026 is blunt: “Some early AI agent deployments will get pushed into production with inadequate QA testing, insufficient security guardrails, or an over-permissioned agent, and we will start to see mischief involving AI agents. I expect 2026 will see AI agents touching core business processes, and some high-profile data breaches and fraud originating from those AI agents.”

Forrester’s prediction is even more direct: Agentic AI will cause a major public breach in 2026 that will lead to employee dismissals. When that breach happens, expect board investigations, regulatory scrutiny over data protection and financial controls, and serious questions about executive accountability. The fallout won’t be limited to IT departments.

The question isn’t whether your organization will face these risks. It’s whether you’ll be ready when they arrive.

What Security Leaders Should Do Now

1. Audit your AI agent exposure. Find out what agents your organization is actually using, what systems they can access, and what actions they can take. You can’t secure what you don’t know about.

2. Implement the principle of least agency. Every AI agent should have only the minimum autonomy required for its task. Review and restrict agent permissions aggressively. Require human approval before agents can execute financial transactions, modify access controls, delete data, or take any action that cannot be easily reversed.

3. Establish deterministic controls for critical decisions. Don’t rely on prompts (the natural language instructions that tell AI agents what to do and how to behave) for security enforcement. Build guardrails into your architecture that cannot be bypassed through prompt manipulation.

4. Rethink authentication for the agentic era. Evaluate modern alternatives like Photolok that resist AI-powered attacks and provide verification that autonomous systems cannot fake.

5. Build strong observability. Implement comprehensive logging of agent actions. Monitor for behavioral anomalies. Create kill switches for rapid disabling.

6. Brief your leadership. AI agent security is a governance issue. Ensure your board of directors understands the stakes before an incident forces that conversation.
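The logging, anomaly monitoring, and kill switch from step 5 can be sketched in a few lines. This is a simplified illustration, not a production design: the `AgentMonitor` class, its rate threshold, and the in-memory log are hypothetical, and a burst of actions within a time window is used here as a stand-in for whatever behavioral anomaly signal your environment actually supports.

```python
import time
from collections import deque
from typing import Optional


class AgentMonitor:
    """Logs every agent action attempt, flags bursts, and exposes a kill switch."""

    def __init__(self, max_actions: int, window_seconds: float):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.log: list[tuple[float, str, str]] = []  # (timestamp, agent_id, action)
        self._recent: dict[str, deque] = {}          # recent timestamps per agent
        self.disabled: set[str] = set()

    def kill(self, agent_id: str) -> None:
        """Kill switch: immediately block all further actions by this agent."""
        self.disabled.add(agent_id)

    def record(self, agent_id: str, action: str, now: Optional[float] = None) -> bool:
        """Log the attempt; return False if the agent is, or has just been, disabled."""
        ts = time.time() if now is None else now
        self.log.append((ts, agent_id, action))  # log even blocked attempts
        if agent_id in self.disabled:
            return False
        recent = self._recent.setdefault(agent_id, deque())
        recent.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while recent and ts - recent[0] > self.window_seconds:
            recent.popleft()
        if len(recent) > self.max_actions:
            self.kill(agent_id)  # anomaly: too many actions in the window
            return False
        return True
```

An orchestrator would call `record` before executing each agent action and skip the action whenever it returns `False`; an operator can also call `kill` directly during an incident.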

The Bottom Line

The enterprise AI landscape in 2026 is moving faster than most security frameworks can adapt. AI agents are no longer experiments. They’re production systems with real permissions and real consequences. The organizations that act now to implement proper governance, authentication, and observability will be the ones that capture the value of agentic AI without becoming the next cautionary tale. Acting now also protects financial results and preserves ROI.

The OWASP Agentic Top 10 gives us a framework. Enterprise policies like Salesforce’s provide governance blueprints. Modern authentication like Photolok addresses the identity challenges that traditional methods cannot solve.

The tools exist. The question is whether your organization will use them before or after the breach.

Photolok Offer

Want to see how Photolok can help secure your organization’s AI-powered future? Request Your Personalized Demo Today.

About the Author

Kasey Cromer is Director of Customer Experience at Netlok.

Sources

[1] PwC. “AI Agent Survey.” May 2025. https://www.pwc.com/us/en/tech-effect/ai-analytics/ai-agent-survey.html

[2] Gartner. “Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026.” August 2025. https://www.gartner.com/en/newsroom/press-releases/2025-08-26

[3] OWASP GenAI Security Project. “OWASP Top 10 for Agentic Applications for 2026.” December 2025. https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

[4] Forrester. “Predictions 2026: Cybersecurity and Risk.” October 2025. https://www.forrester.com/blogs/predictions-2026-cybersecurity-and-risk/

[5] Stellar Cyber. “Top Agentic AI Security Threats in 2026.” December 2025. https://stellarcyber.ai/learn/agentic-ai-securiry-threats/

[6] G2. “Enterprise AI Agents Report: Industry Outlook for 2026.” December 2025. https://learn.g2.com/enterprise-ai-agents-report

[7] CyberArk. “AI Agents and Identity Risks: How Security Will Shift in 2026.” December 2025. https://www.cyberark.com/resources/blog/ai-agents-and-identity-risks-how-security-will-shift-in-2026

[8] BleepingComputer. “The Real-World Attacks Behind OWASP Agentic AI Top 10.” January 2026. https://www.bleepingcomputer.com/news/security/the-real-world-attacks-behind-owasp-agentic-ai-top-10/

[9] Omdia/Dark Reading. “Identity Security 2026: Predictions and Recommendations.” January 2026. https://www.darkreading.com/identity-access-management-security/identity-security-2026-predictions-and-recommendations

[10] SecurityWeek. “Rethinking Security for Agentic AI.” January 2026. https://www.securityweek.com/rethinking-security-for-agentic-ai/

[11] Cloud Security Alliance. “Top 10 Predictions for Agentic AI in 2026.” January 2026. https://cloudsecurityalliance.org/blog/2026/01/16/my-top-10-predictions-for-agentic-ai-in-2026

[12] McKinsey. “The State of AI in 2025: Agents, Innovation, and Transformation.” November 2025. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

[13] Salesforce. “Artificial Intelligence Acceptable Use Policy.” December 2025. https://www.salesforce.com/company/legal/agreements/

[14] Salesforce. “Model Containment Policy.” January 2026.

[15] Netlok. “How Photolok Works.” 2025. https://netlok.com/how-it-works/

[16] MIT/CIO. “2026: The Year AI ROI Gets Real.” January 2026. https://www.cio.com/article/4114010/2026-the-year-ai-roi-gets-real.html

[17] Astrix Security. “The OWASP Agentic Top 10 Just Dropped: Here’s What You Need to Know.” December 2025. https://astrix.security/learn/blog/the-owasp-agentic-top-10-just-dropped-heres-what-you-need-to-know/

[18] Giskard. “OWASP Top 10 for Agentic Applications 2026: Security Guide.” December 2025. https://www.giskard.ai/knowledge/owasp-top-10-for-agentic-application-2026

[19] Palo Alto Networks. “OWASP Top 10 for Agentic Applications 2026 Is Here.” December 2025. https://www.paloaltonetworks.com/blog/cloud-security/owasp-agentic-ai-security/

[20] ActiveFence. “OWASP Top 10 for Agentic AI.” December 2025. https://www.activefence.com/blog/owasp-top-10-agentic-ai/